Codex in the Bay: Inside the Rise of Agentic AI Workflows

George Pickett Gathers Codex Enthusiasts to Demo and Predict the Direction of Agentic AI

On Jan. 13, 2026, George Pickett, self-taught engineer and Codex community builder, gathered Codex enthusiasts from around the Bay Area to demo how they use Codex and offer their predictions on the direction of agentic AI.

Pickett’s free event, posted to Luma, drew so much interest (more than 370 RSVPs) that he had to find a larger venue. WorkOS, a modern API platform, offered to host, providing pizza and beverages to guests in its office building off Market Street.

The mood was light as engineers shared their excitement and mingled around the room. Pickett kicked off the event by asking everyone to participate in the polling interface he created in Codex. He smiled and noted that it was good to see the poll in action because it was hard to test as an individual person. The room chuckled.

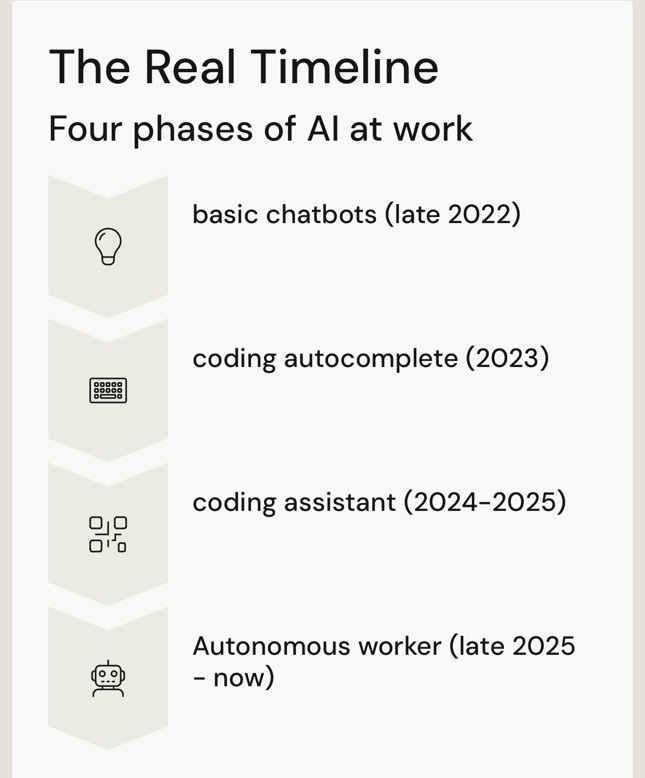

Codex as a Labor Force

Pickett explained how last summer he’d been using Claude Code and recently dove into Codex, citing its models as the best thus far. He described the evolution of AI in coding up to this point: in late 2022, basic chatbots; in 2023, coding autocomplete; in 2024-25, coding assistants; and in late 2025, autonomous workers.

Pickett predicted that AI will become more of a coworker than an assistant over time, and there will be an evolution from planning to memory to tools to autonomy in AI. According to Pickett, this is how Codex becomes “a labor force.”

He elaborated on his process in Codex: first, he dictates with Wispr Flow; then PLANS.md turns that dictation into an EXECPLAN.md that the agent uses to build and stay on track. The EXECPLAN.md is like a job description for Codex and can instruct the agent to retain more memory so that it can debug itself. Pickett uses the journal/ directory as institutional memory and PLANS.md as a process guide.

You can access codex-ralph, built by Pickett, to “turn Codex into a durable, long-running teammate: point it at any repo, give it a living ExecPlan, and let it chip away at the work safely and methodically.”

Pickett predicted that in the future, agents will break scale. The room laughed aloud at this one. Pickett went on to describe how he foresees AI deleting important files, losing work, corrupting projects, and introducing silent features, to humankind’s frustration. These risks drastically increase the value of version control and Docker containers.

There are three things that AI agents lack, according to Pickett: authentication across services (this is one that Y Combinator-backed startup, Multifactor, is solving for), unified tool access, and safe execution boundaries.

“Things are evolving so rapidly, the ecosystem has lots of architectures and tools to choose from, so best practices are in flux,” said Pickett. “Patterns for storing and accessing skills, using MCPs, and managing permissions aren’t yet agreed upon.”

According to Pickett, the future interfaces of AI will resemble unified agent inboxes with questions, blockers, results, and other pertinent information. Largely, he believes that you will just be able to instruct an agent and let it run, working alongside you like a coworker more than an assistant.

I Want to Write Some Code

Dominik Kundel, Developer Experience at OpenAI, presented his workflow in Codex. He is well known for carrying his laptop around the office, instructing Codex and waiting for it to carry out his whims throughout the day.

“I use the Slack, GitHub and Linear integrations to punt distractions,” Kundel shared on X after the event. “If someone flags an issue I first tag Codex in the thread/issue to have it take an initial stab.” When Kundel is on the go, he uses the Codex tab in the ChatGPT iOS app to start a task.

Kundel’s “relatively vanilla local setup” includes the following experimental features:

web_search_request → let Codex access recent data (this removes the need for using an MCP for most docs)

unified_exec → have Codex be able to run background terminals

shell_snapshot → to speed up terminal commands

For planning larger features, Kundel uses an $.exec-plan “skill” that he created by asking $.skill-creator to convert his Exec Plan article into a skill. Then, the skill writes the plan to a file and Codex implements it. You can learn more by reading Aaron Friel’s Using PLANS.md for Multi-Hour Problem Solving in OpenAI’s Cookbook.

Kundel doesn’t spend too much time on prompting. He simply instructs Codex to create “pretty” interfaces using ShadCN and Tailwind. He noted that screenshots are great for frontend work in Codex.

To cut down on time spent repeatedly copying into the repository, Kundel emphasizes creating skills, which can also reduce token usage. “Codex can review its own documentation at this point,” said Kundel, whose enthusiasm for Codex’s ability to debug itself was apparent. “Codex wrote itself a skill that evaluates whether it coheres, and it rewrites paragraphs.”

To speed up Codex, Kundel creates many project-level skills that share common processes across his team. For example, he uses a $.docs-editor skill that uses http://vale.sh for linting, in addition to style guides. The skills are generated using $.skill-creator, often after Codex does the work for the first time. He also combines $.skill-creator with a planning skill for even more complex skills.

After his demo, Kundel took several questions from the audience:

Q: “Is the CLI better than the web tool?”

A: “No, I [Kundel] use all of them.”

Q: “What reasoning level do you use?”

A: “Medium for when it’s an easy task and I am very involved, or extra high when it is complex work and I am walking away or planning.”

Q: “Does using Skills save tokens?”

A: “Yes, skills are repetitive actions that save tokens.”

Q: “Have you experienced context compacting?”

A: “Yes, compaction is built in when you get to a maximum.”

Between questions, Kundel expressed a jovial enthusiasm: “I’ve reached a point where I want to write some code.” It is a refreshing take that many engineers have expressed on X lately: agentic AI now runs the tedious tasks, which makes coding easier and building more fun.

In his last note, Kundel encouraged users to use Claude for review or /review to debug, and to have Codex write code to test its own bugs and theories. You can read more intricate details on Kundel’s workflow in his overview on X.

Vibe Building Beyond Human Comprehension

Danial Hasan introduced how his startup, TrySquad, is focused on the coordination of agents. TrySquad is the first AI organization for autonomous engineering. “If it’s verifiable, it can be automated,” said Hasan. “Not all things are automatically verifiable, and your job is to make them verifiable.”

He begins his projects by providing Codex with the mission, context, and desired end state. He specified that markdown alone is not enough for this; the same information must also exist in code. He sends Codex on the mission with tests as deliverables.

As of now, he verifies the code himself, though he predicted that in the future, verifying code will not involve reviewing every line.

“A lot of files are being created,” said Hasan. “How do you organize them?” Hasan described starting with daily session directories, one per working session, where documentation, research artifacts, and other intellectual byproducts are stored. This keeps exploratory work from polluting the production codebase, similar to how a math student might use daily scratchpads while solving problems.
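The session-directory idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `sessions` root, the `session-NN` naming, and the three subfolder names are hypothetical and were not described in the talk.

```python
from datetime import date
from pathlib import Path


def session_dir(root: Path, day: date, session: int = 1) -> Path:
    """Create and return a scratch directory such as root/2026-01-13/session-01."""
    path = root / day.isoformat() / f"session-{session:02d}"
    # Subfolder names are illustrative assumptions, not taken from the talk.
    for sub in ("docs", "research", "scratch"):
        (path / sub).mkdir(parents=True, exist_ok=True)
    return path


if __name__ == "__main__":
    # Keeps exploratory artifacts under ./sessions, outside the production code.
    print(session_dir(Path("sessions"), date.today()))
```

Keeping the root outside the source tree (or listing it in .gitignore) is one way to keep scratch work from leaking into production commits.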

Hasan described skills in Codex as behavior contracts: rules that define how an agent behaves, which tools it may use, and how it pursues a given goal. He embeds skills within skills to compound agent effectiveness and free up human time for creative work.

Separately, Hasan emphasized the importance of canonical logging: a structured approach to logging that captures meaningful slices of system behavior rather than overly granular events. Canonical logs make it possible for agents to reconstruct the full picture of what happened in production, allowing issues to be diagnosed without inspecting every line of code.
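As a rough sketch of what canonical logging can look like in practice, the Python below collects fields over one unit of work and emits a single structured line at the end, rather than many small events. The `CanonicalLog` class and every field name here are hypothetical; Hasan did not show TrySquad’s implementation.

```python
import json
import time


class CanonicalLog:
    """Collects fields during one unit of work and emits a single JSON line."""

    def __init__(self, **fields):
        self.fields = dict(fields)
        self._start = time.monotonic()

    def add(self, **fields):
        # Later stages enrich the same record instead of logging separately.
        self.fields.update(fields)

    def emit(self) -> str:
        elapsed_ms = (time.monotonic() - self._start) * 1000
        self.fields["duration_ms"] = round(elapsed_ms, 1)
        line = json.dumps(self.fields, sort_keys=True)
        print(line)
        return line


if __name__ == "__main__":
    log = CanonicalLog(event="checkout", request_id="req-123")
    log.add(user_id=42, cart_items=3)
    log.add(status=200)
    log.emit()
```

One line per request gives an agent a compact record to query when it reconstructs what happened in production.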

TrySquad focuses heavily on dependency mapping: the process of determining which steps must be completed before others in order to safely parallelize work. Hasan described this as a prerequisite to delegation: agents cannot be assigned tasks in parallel until the dependency graph is understood.
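One common way to turn a dependency map into parallel work is a topological sort that releases tasks in batches. The sketch below uses Python’s standard-library `graphlib`; the task names are made up for illustration, and this is not a description of how TrySquad does it.

```python
from graphlib import TopologicalSorter


def parallel_batches(deps: dict[str, set[str]]) -> list[list[str]]:
    """Group tasks into batches; each task's prerequisites are in earlier batches."""
    sorter = TopologicalSorter(deps)  # maps each task to its prerequisites
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # tasks with no unfinished prerequisites
        batches.append(ready)
        sorter.done(*ready)  # mark the batch finished to unlock the next one
    return batches


if __name__ == "__main__":
    # Hypothetical task graph.
    deps = {
        "schema": set(),
        "api": {"schema"},
        "ui": {"schema"},
        "e2e-tests": {"api", "ui"},
    }
    print(parallel_batches(deps))
```

For this graph the batches are `[["schema"], ["api", "ui"], ["e2e-tests"]]`: the two middle tasks can go to separate agents, while the others must run alone.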

To support parallel execution, Hasan uses Git worktrees to give each agent an isolated copy of the codebase. This prevents agents from interfering with one another’s changes and makes conflicts explicit and easier to resolve during merges.

Hasan joked that while OpenAI excels at post-training, “Claude … has a soul,” at which the room erupted with laughter, “and is better with tokens.” He recommended Codex for teams that want to minimize long-term technical debt, even if it requires more upfront time.

Hasan’s most notable prediction was that we will begin to “vibe build beyond human comprehension,” not because understanding no longer matters, but because no single human can fully grasp systems of that scale.

“As our systems grow beyond individual comprehension,” Hasan said, “we’ll need systems that ensure our agents understand every part of the system, at both high and low levels, better than any human. That’s what we’re building at TrySquad.”

Agents Running in Parallel

Adel Ahmadyan presented Agentastic Dev as a solution for running multiple coding agents in parallel on the same repository, without them interfering with one another. Agentastic Dev is a terminal-first IDE designed to run coding agents such as Codex and Claude Code concurrently, with built-in editing, diffing, and code review.

Running modern coding agents, Codex in particular, can take 30 minutes to an hour for complex tasks. During that time, developers are often idle. Ahmadyan argued that if agents are doing long-running work, developer productivity improves dramatically when those agents can run in parallel. The challenge is that multiple agents operating on the same codebase will conflict unless they are properly isolated.

Agentastic Dev solves this by giving each agent its own isolated snapshot of the repository. This is achieved using Git worktrees to isolate git state, often combined with containers to isolate runtime environments and permissions. Each agent works in its own worktree, allowing multiple features or experiments to proceed simultaneously without stepping on one another’s changes. Developers can quickly switch between worktrees while agents wait for additional input.
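The worktree-per-agent pattern can be sketched with plain `git worktree add`. The Python below is a minimal illustration, not Agentastic Dev’s implementation: the sibling-directory layout, the `agent/<name>` branch naming, and the `main` base branch are all assumptions.

```python
import subprocess
from pathlib import Path


def worktree_command(repo: Path, agent: str, base: str = "main") -> list[str]:
    """Build the `git worktree add` command that gives one agent its own checkout."""
    # Assumed layout: worktrees live next to the repo, e.g. app-codex-1.
    path = repo.parent / f"{repo.name}-{agent}"
    # -b creates a new branch for the agent, starting from `base`.
    return ["git", "-C", str(repo), "worktree", "add",
            "-b", f"agent/{agent}", str(path), base]


def create_worktrees(repo: Path, agents: list[str], base: str = "main") -> None:
    """Run the command for each agent; requires git and an existing repository."""
    for agent in agents:
        subprocess.run(worktree_command(repo, agent, base), check=True)


if __name__ == "__main__":
    # Print the commands instead of running them.
    for name in ("codex-1", "claude-1"):
        print(" ".join(worktree_command(Path("/tmp/app"), name)))
```

Worktrees isolate only git state; as the paragraph above notes, runtime isolation and permissions still call for containers or a similar boundary.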

Ahmadyan also described testing workflows that emerge once parallel execution becomes frictionless. Developers often send the same task to multiple agents, sometimes even across different models, and select the best result. This effectively turns agent usage into a pass@k strategy, increasing the likelihood of a strong first solution.
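A back-of-the-envelope calculation shows why pass@k helps. The sketch below assumes each attempt succeeds independently with the same probability p, which is a simplification; agents given the same task tend to fail in correlated ways, so real gains are usually smaller.

```python
def pass_at_k(p: float, k: int) -> float:
    """Chance that at least one of k independent attempts succeeds."""
    if not 0.0 <= p <= 1.0 or k < 1:
        raise ValueError("p must be in [0, 1] and k must be >= 1")
    # All k attempts fail with probability (1 - p) ** k.
    return 1.0 - (1.0 - p) ** k


if __name__ == "__main__":
    for k in (1, 2, 3, 5):
        print(k, round(pass_at_k(0.5, k), 3))
```

Under that assumption, an agent that succeeds half the time yields at least one success 87.5% of the time when three copies run in parallel.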

Cost and observability were recurring themes. Ahmadyan agreed with Hasan that Codex’s subscription model makes costs more predictable, compared to usage-based billing that can spike unexpectedly when agents run unchecked. He also highlighted that Agentastic Dev includes built-in diff viewers and review agents that operate under the same subscription.

“Review agents run out of budget quickly versus more budget for CLI agents, and you can see when it’s done rather than checking GitHub over and over,” Ahmadyan said.

One emerging practice he noted is cross-model review: using Codex to review Claude-generated code and vice versa, since different models tend to catch different classes of issues.

Learn More About Codex SF

Moving forward, Pickett’s Codex SF community will host a monthly speaker-focused event and a weekly Codex Builders Night. Subscribe to the Codex SF community’s Luma page to RSVP to the next builder night, and to keep your eye out for upcoming events.

Article written by Melissa Leavenworth

Checked for AP Style by Associated Press Stylebook GPT
